Efficient Attention: Breaking The Quadratic Transformer Bottleneck

Discussion of removing a major architectural limitation in Transformer neural networks: the length of the input it can look at. Beyond a few thousand inputs, the resource requirements explode quadratically, rendering it infeasible to encode raw text at the character level, much less use entire books, images, or many other kinds of data which could be useful. Even for text, this inability also forces limitations like the use of BPE text encoding (responsible for sabotaging GPT-3’s rhyming, among other things), forgetfulness, limits to prompt programming, and inability to write coherent long texts.
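To make the quadratic cost concrete, here is a minimal NumPy sketch of standard (dense) softmax self-attention. It is an illustration under assumptions, not any particular model's code: the learned query/key/value projections are omitted, and `dense_attention` is a hypothetical name. The n × n weight matrix is what explodes, since doubling the sequence length quadruples its size.

```python
import numpy as np


def dense_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray):
    """Standard softmax attention; returns (output, attention weights).

    q, k: (n, d); v: (n, d_v). The weight matrix is n x n, so memory and
    compute both grow with the square of the sequence length n.
    Sketch assumption: learned Q/K/V projections are omitted.
    """
    d = q.shape[-1]
    scores = q @ k.T / np.sqrt(d)                     # (n, n): every query vs. every key
    scores -= scores.max(axis=-1, keepdims=True)      # subtract row max for numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)    # row-wise softmax
    return weights @ v, weights


rng = np.random.default_rng(0)
for n in (128, 256, 512):
    q, k, v = (rng.standard_normal((n, 64)) for _ in range(3))
    out, w = dense_attention(q, k, v)
    print(n, w.size)  # attention-matrix entries: 16384, 65536, 262144
```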

A bibliography of possible fixes is organized hierarchically below:

adding state, through recurrence (a memory) or creating a compressed history/state as an explicit summary

tinkering with matrix algebra to remove the quadratic explosion while still keeping more or less the same self-attention mechanism

approximating self-attention: using attention on only a small subset of tokens at any time (dodging the quadratic limit), or using a mix of local and global attention (local attentions to do most of the work, and global attention on top of the local attentions, each one avoiding the quadratic by considering only a few inputs at a time)

miscellaneous tricks: removing parts, using only randomized untrainable components (which need no gradients computed over them), etc.

One of the most frustrating limitations of GPT-3 (as awesome as it is) is the context window: 2048 text tokens (BPEs) is adequate for many text-related tasks, and even GPT-3’s performance on that window is far from perfect, indicating it has a long way to go in truly understanding text. But 2048 BPEs runs out fast when you start prompt programming something hard, hacks like BPEs have nasty & subtle side-effects, and a 2048-token window (as iGPT/ViT indicate in their own ways) is totally inadequate for other modalities like images: a single small 256×256px image, at one token per pixel, is already a sequence of length l = 65,536, never mind video or raw audio!
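The arithmetic behind that image example, as a back-of-the-envelope sketch. It assumes one token per pixel, 2-byte (fp16/bf16) entries, and a naive implementation that materializes the attention matrix for a single head in a single layer; `attention_matrix_bytes` is a hypothetical helper. Under those assumptions the image needs 8 GiB for one matrix, 1,024× the 2048-token case, because a 32× longer sequence costs 32² = 1,024× more.

```python
GIB = 2 ** 30


def attention_matrix_bytes(seq_len: int, bytes_per_entry: int = 2) -> int:
    """Bytes for one materialized n x n attention matrix (one head, one layer).

    Sketch assumptions: 2-byte entries and a naive implementation that stores
    the whole matrix; memory-efficient kernels avoid storing it.
    """
    return seq_len * seq_len * bytes_per_entry


gpt3_window = 2048
image_seq = 256 * 256  # assumption: one token per pixel of a 256x256 image

print(image_seq)                                       # 65536
print(attention_matrix_bytes(gpt3_window) / GIB)       # 0.0078125 (i.e. 8 MiB)
print(attention_matrix_bytes(image_seq) / GIB)         # 8.0
print(attention_matrix_bytes(image_seq) // attention_matrix_bytes(gpt3_window))  # 1024
```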

How do we get future Transformers with reasonable context windows and/or memory, which we can use for research papers, books, structured text, images, video, audio, point clouds, genomics, and so on, where we need to handle sequences with lengths in the millions? (Such improvements would permit not just doing things GPT-3 struggles to do, like write coherent novels, but many better architectures, like multimodal Transformers which can learn jointly from images & text, accessing image-based datasets like PDFs, and learning far more accurate human-like representations & tacit knowledge with less data & smaller models, providing large models useful for almost all conceivable tasks—especially robotics.)

Below I compile & categorize research on breaking the dense attention quadratic bottleneck (overviews: Lilian Weng, Madison May; review: Tay et al 2020; benchmark suite: Long Range Arena[1]):

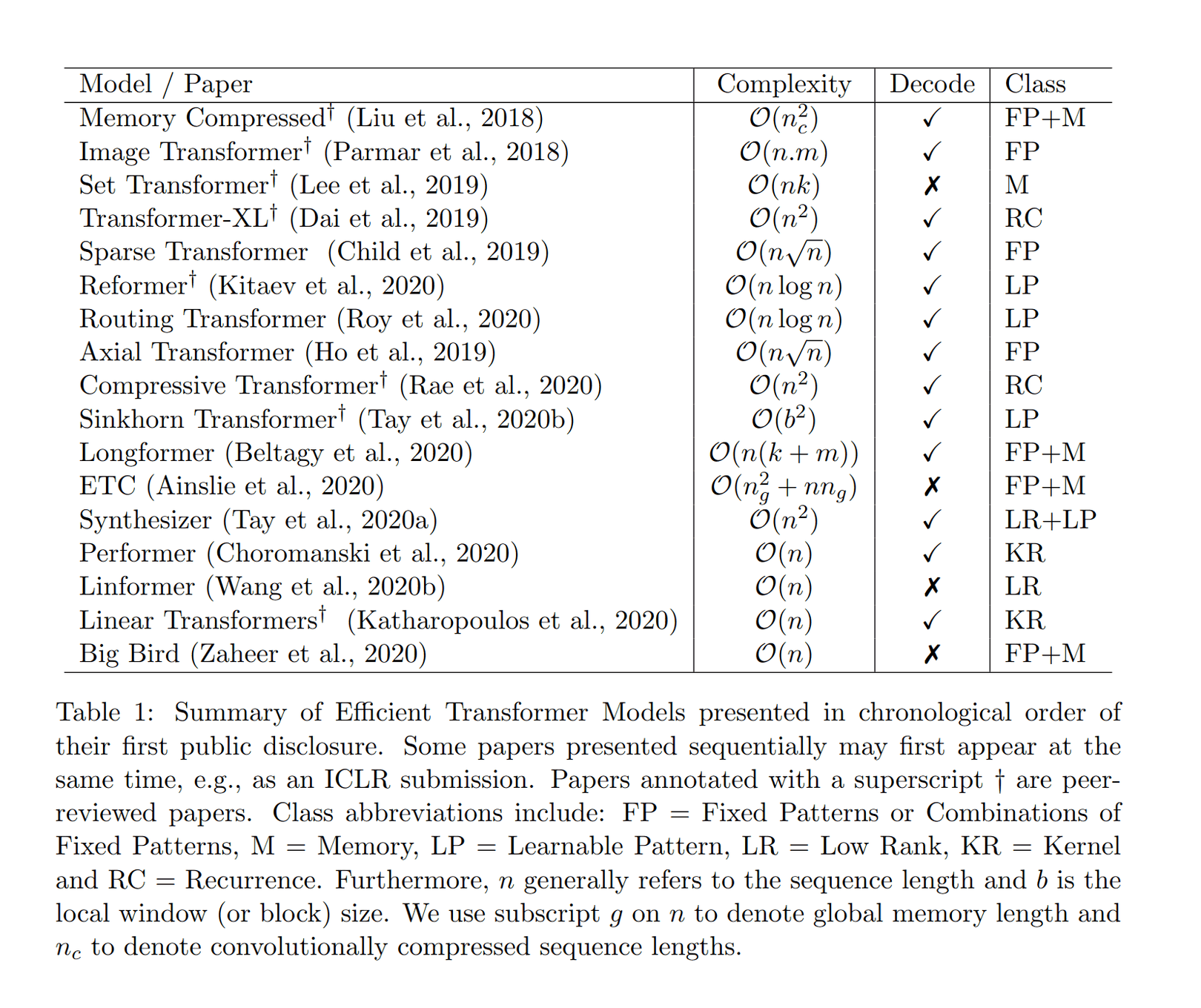

Table 1: Summary of Efficient Transformer Models presented in chronological order of their first public disclosure (Tay et al 2020)

The summary as of mid-2023: dense Transformers remain surprisingly competitive, and the many proposed variants all have their own drawbacks; none have superseded standard GPT or T5-style Transformers in more than a few niches. To paraphrase Chekhov: “If many remedies are prescribed for an illness you can be sure it has no cure.”

Efficient Attention

State

Recurrency

“Universal Transformers”, Dehghani et al 2018 (?); “Deep Equilibrium Models”, Bai et al 2019

“Transformer-XL: Attentive Language Models Beyond a Fixed-Length Context”, Dai et al 2019 (blog)

“XLNet: Generalized Autoregressive Pretraining for Language Understanding”, Yang et al 2019[2]

“Untangling tradeoffs between recurrence and self-attention in neural networks”, Kerg et al 2020

“Feedback Transformer: Addressing Some Limitations of Transformers with Feedback Memory”, Fan et al 2020

“Shortformer: Better Language Modeling using Shorter Inputs”, Press et al 2020

“SRU++: When Attention Meets Fast Recurrence: Training Language Models with Reduced Compute”, Lei 2021

“SwishRNN: Simple Recurrence Improves Masked Language Models”, Lei et al 2022

“Block-Recurrent Transformers”, Hutchins et al 2022

RNNs:

Transformer ↔︎ RNN relationship: see Transformer-XL, XLNet, Katharopoulos et al 2020, Yoshida et al 2020, AFT, Lei 2021, Kasai et al 2021, Parisotto & Salakhutdinov 2021, Perceiver, SwishRNN, RWKV
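One concrete version of this relationship (Katharopoulos et al 2020): if the softmax is replaced by a kernel φ(q)·φ(k) with a positive feature map, causal attention can be evaluated either in parallel over the whole sequence or as an RNN whose state is a fixed-size d × d_v matrix plus a d-vector. The sketch below assumes the elu(x)+1 feature map from that paper, omits learned projections, uses hypothetical function names, and checks that both forms agree.

```python
import numpy as np

# Sketch assumptions: no learned projections; elu(x)+1 kernel instead of softmax.


def feature_map(x: np.ndarray) -> np.ndarray:
    # elu(x) + 1: a strictly positive feature map (Katharopoulos et al 2020).
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))


def causal_linear_attention_parallel(q, k, v):
    """Parallel (training-time) form: builds an n x n matrix, masked to j <= i."""
    a = np.tril(feature_map(q) @ feature_map(k).T)
    return (a @ v) / a.sum(axis=1, keepdims=True)


def causal_linear_attention_recurrent(q, k, v):
    """RNN form: a fixed-size state (s, z) is updated once per token."""
    fq, fk = feature_map(q), feature_map(k)
    s = np.zeros((fk.shape[1], v.shape[1]))  # running sum of outer(phi(k_j), v_j)
    z = np.zeros(fk.shape[1])                # running sum of phi(k_j), for normalization
    out = np.empty((q.shape[0], v.shape[1]))
    for i in range(q.shape[0]):
        s += np.outer(fk[i], v[i])
        z += fk[i]
        out[i] = (fq[i] @ s) / (fq[i] @ z)
    return out


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((32, 8)) for _ in range(3))
print(np.allclose(causal_linear_attention_parallel(q, k, v),
                  causal_linear_attention_recurrent(q, k, v)))  # True
```

The recurrent state does not grow with the sequence length, which is what makes generation cost constant per token; the price is that φ(q)·φ(k) is not the softmax kernel, so this is a different model rather than an exact speedup of standard attention.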

Compressed History/State

“Compressive Transformers for Long-Range Sequence Modeling”, Rae et al 2019; “Expire-Span: Not All Memories are Created Equal: Learning to Forget by Expiring”, Sukhbaatar et al 2021

“Memory Transformer”, Burtsev & Sapunov 2020

“Set Transformer: A Framework for Attention-based Permutation-Invariant Neural Networks”, Lee et al 2018; “Perceiver: General Perception with Iterative Attention”, Jaegle et al 2021a/“Perceiver IO: A General Architecture for Structured Inputs & Outputs”, Jaegle et al 2021b

“Mem2Mem: Learning to Summarize Long Texts with Memory Compression and Transfer”, Park et al 2020

“∞-former: Infinite Memory Transformer”, Martins et al 2021

“Memorizing Transformers”, Wu et al 2022

“ABC: Attention with Bounded-memory Control”, Peng et al 2021

“Recursively Summarizing Books with Human Feedback”, Wu et al 2021

“MeMViT: Memory-Augmented Multiscale Vision Transformer for Efficient Long-Term Video Recognition”, Wu et al 2022

“Token Turing Machines”, Ryoo et al 2022
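As an illustration of the recurrence/compression idea, here is a hypothetical toy memory combining Transformer-XL-style cached segments with a Compressive-Transformer-style compressed store (Rae et al 2019): recent hidden states are kept verbatim, and states pushed out of that window are mean-pooled (one of several compression functions Rae et al consider) instead of being discarded. The class name, the use of raw hidden states as both keys and values, and the choice of mean-pooling are all assumptions of this sketch.

```python
import numpy as np


class CompressiveMemory:
    """Hypothetical toy memory: a FIFO of recent states plus a compressed store.

    States evicted from `mem` are mean-pooled in groups of `rate` into `cmem`
    rather than discarded. Sketch assumptions: cmem_len > 0, and the number of
    evicted states per update is a multiple of `rate`.
    """

    def __init__(self, d: int, mem_len: int, cmem_len: int, rate: int):
        self.mem = np.zeros((0, d))
        self.cmem = np.zeros((0, d))
        self.mem_len, self.cmem_len, self.rate = mem_len, cmem_len, rate

    def update(self, states: np.ndarray) -> None:
        self.mem = np.concatenate([self.mem, states])
        overflow = len(self.mem) - self.mem_len
        if overflow > 0:
            old, self.mem = self.mem[:overflow], self.mem[overflow:]
            pooled = old.reshape(-1, self.rate, old.shape[1]).mean(axis=1)
            # Keep only the newest cmem_len compressed states.
            self.cmem = np.concatenate([self.cmem, pooled])[-self.cmem_len:]

    def context(self, current: np.ndarray) -> np.ndarray:
        # Keys/values to attend over: compressed past, recent past, current segment.
        return np.concatenate([self.cmem, self.mem, current])


def attend(q: np.ndarray, kv: np.ndarray) -> np.ndarray:
    # Softmax attention using the same vectors as keys and values (no projections).
    s = q @ kv.T / np.sqrt(q.shape[1])
    w = np.exp(s - s.max(axis=1, keepdims=True))
    return (w / w.sum(axis=1, keepdims=True)) @ kv


rng = np.random.default_rng(0)
memory = CompressiveMemory(d=16, mem_len=8, cmem_len=4, rate=2)
for step in range(5):
    segment = rng.standard_normal((4, 16))  # 4 new token states per segment
    out = attend(segment, memory.context(segment))
    memory.update(segment)
print(memory.mem.shape, memory.cmem.shape)  # (8, 16) (4, 16)
```

However many segments are processed, each query attends to at most cmem_len + mem_len + segment-length positions (16 here), so per-segment cost stays bounded while older information survives in pooled form.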

Matrix Algebra Optimizations

Tricks like rewriting the softmax/dot-product to be linear:

“Efficient Attention: Attention with Linear Complexities”, Shen et al 2018 (blog)

“Linformer: Self-Attention with Linear Complexity”, Wang et al 2020; “Luna: Linear Unified Nested Attention”, Ma et al 2021 (hierarchical?); “Beyond Self-attention: External Attention using Two Linear Layers for Visual Tasks”, Guo et al 2021

“Transformers are RNNs (Linear Transformers): Fast Autoregressive Transformers with Linear Attention”, Katharopoulos et al 2020

“AFT: An Attention Free Transformer”, Zhai et al 2021

“LambdaNetworks: Modeling long-range Interactions without Attention”, Bello 2020

“cosFormer: Rethinking Softmax in Attention”, Qin et al 2022
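The core algebraic trick behind several entries here, sketched in the form used by Katharopoulos et al 2020 (other papers in this section use different tricks, such as Linformer's low-rank projection along the sequence axis). Softmax attention cannot be reassociated because exp(q·k) does not factor, but a kernel φ(q)·φ(k) can: (φ(Q)φ(K)ᵀ)V = φ(Q)(φ(K)ᵀV), and the right-hand side never forms an n × n matrix. The elu(x)+1 feature map and the function names are assumptions of this sketch.

```python
import numpy as np

# Sketch assumptions: no learned projections; elu(x)+1 kernel instead of softmax.


def feature_map(x: np.ndarray) -> np.ndarray:
    # elu(x) + 1, a positive feature map standing in for exp(q . k).
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))


def kernel_attention_quadratic(q, k, v):
    """Reference version: materializes the n x n similarity matrix."""
    a = feature_map(q) @ feature_map(k).T
    return (a @ v) / a.sum(axis=1, keepdims=True)


def kernel_attention_linear(q, k, v):
    """Same result, reassociated so nothing of size n x n is ever built."""
    fq, fk = feature_map(q), feature_map(k)
    kv = fk.T @ v            # (d, d_v) summary of all keys and values
    z = fk.sum(axis=0)       # (d,) normalizer
    return (fq @ kv) / (fq @ z)[:, None]


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((1000, 16)) for _ in range(3))
print(np.allclose(kernel_attention_quadratic(q, k, v),
                  kernel_attention_linear(q, k, v)))  # True
```

With n tokens and feature dimension d, the second function costs O(n·d·d_v) rather than O(n²·d).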

Approximations

Sparsity

“Image Transformer”, Parmar et al 2018

Sparse Transformer: “Generating Long Sequences with Sparse Transformers”, Child et al 2019 (blog)

“Adaptive Attention Span in Transformers”, Sukhbaatar et al 2019

“Reformer: The Efficient Transformer”, Kitaev et al 2019 (blog: 1, 2); “SMYRF: Efficient Attention using Asymmetric Clustering”, Daras et al 2020; “Cluster-Former: Clustering-based Sparse Transformer for Long-Range Dependency Encoding”, Wang et al 2020; “You Only Sample (Almost) Once: Linear Cost Self-Attention Via Bernoulli Sampling”, Zeng et al 2021

“Star-Transformer”, Guo et al 2019

“Efficient Content-Based Sparse Attention with Routing Transformers”, Roy et al 2020

“Sparse Sinkhorn Attention”, Tay et al 2020 (blog)

“BigBird: Transformers for Longer Sequences”, Zaheer et al 2020 (blog; see also ETC)

Axial attention: “Axial Attention in Multidimensional Transformers”, Ho et al 2019; Huang et al 2018; Wang et al 2020b; Weissenborn et al 2020[3]

“Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting”, Zhou et al 2020

“LogSparse Transformer: Enhancing the Locality and Breaking the Memory Bottleneck of Transformer on Time Series Forecasting”, Li et al 2019

“OmniNet: Omnidirectional Representations from Transformers”, Tay et al 2021

“Combiner: Full Attention Transformer with Sparse Computation Cost”, Ren et al 2021

“Scatterbrain: Unifying Sparse and Low-rank Attention Approximation”, Chen et al 2021

“Sparse Is Enough in Scaling Transformers”, Jaszczur et al 2021

Note: several sparse-attention implementations are available in DeepSpeed.
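As a rough illustration of a fixed sparsity pattern, here is a sketch of a causal "strided" mask in the spirit of the Sparse Transformer (Child et al 2019): each query sees the previous `stride` positions plus every `stride`-th position before that, so with stride ≈ √n each row has about 2√n allowed keys instead of up to n. The function names are hypothetical, and applying a dense boolean mask like this still spends O(n²) work; actual savings require block-sparse kernels such as DeepSpeed's.

```python
import numpy as np


def strided_mask(n: int, stride: int) -> np.ndarray:
    """Causal strided pattern (sketch in the spirit of Child et al 2019).

    Query i may attend to key j (j <= i) if j is within the previous `stride`
    positions (local part) or if i - j is a multiple of `stride` (strided part).
    """
    i = np.arange(n)[:, None]
    j = np.arange(n)[None, :]
    diff = i - j
    return (diff >= 0) & ((diff < stride) | (diff % stride == 0))


def masked_attention(q, k, v, mask):
    # Sketch assumption: dense scores plus a mask; no learned projections.
    scores = q @ k.T / np.sqrt(q.shape[1])
    scores = np.where(mask, scores, -np.inf)     # disallowed pairs get zero weight
    scores -= scores.max(axis=1, keepdims=True)  # the diagonal is always allowed, so this is finite
    w = np.exp(scores)
    return (w / w.sum(axis=1, keepdims=True)) @ v


n, stride = 16, 4  # stride ≈ sqrt(n)
mask = strided_mask(n, stride)
print(mask.sum(), n * (n + 1) // 2)  # 82 136 (allowed pairs vs. dense causal)

rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((n, 8)) for _ in range(3))
out = masked_attention(q, k, v, mask)
```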

Global ↔︎ Local Attention

“LSRA: Lite Transformer with Long-Short Range Attention”, Wu et al 2020a

“BlockBERT: Blockwise self-attention for long document understanding”, Qiu et al 2019

“BP-Transformer: Modeling Long-Range Context via Binary Partitioning”, Ye et al 2019

“Longformer: The Long-Document Transformer”, Beltagy et al 2020; “CD-LM: Cross-Document Language Modeling”, Caciularu et al 2021; “Simple Local Attentions Remain Competitive for Long-Context Tasks”, Xiong et al 2021

“ETC: Encoding Long and Structured Data in Transformers”, Ainslie et al 2020; “LongT5: Efficient Text-To-Text Transformer for Long Sequences”, Guo et al 2021[4]

“Conformer: Convolution-augmented Transformer for Speech Recognition”, Gulati et al 2020 (Zhang et al 2020)

“SMITH: Beyond 512 Tokens: Siamese Multi-depth Transformer-based Hierarchical Encoder for Document Matching”, Yang et al 2020

“Multi-scale Transformer Language Models”, Subramanian et al 2020

“Hierarchical Transformers for Multi-Document Summarization”, Liu & Lapata 2019; “Hi-Transformer: Hierarchical Interactive Transformer for Efficient and Effective Long Document Modeling”, Wu et al 2021

“Transformer-QL: A Step Towards Making Transformer Network Quadratically Large”, Hajra 2020

“Coordination Among Neural Modules Through a Shared Global Workspace”, Goyal et al 2021

“GANSformer: Generative Adversarial Transformers”, Hudson & Zitnick 2021

“Swin Transformer: Hierarchical Vision Transformer using Shifted Windows”, Liu et al 2021a; “Swin Transformer V2: Scaling Up Capacity and Resolution”, Liu et al 2021b

“Hierarchical Transformers Are More Efficient Language Models”, Nawrot et al 2021

“Long-Short Transformer (Transformer-LS): Efficient Transformers for Language and Vision”, Zhu et al 2021

“AdaMRA: Adaptive Multi-Resolution Attention with Linear Complexity”, Zhang et al 2021

“Fastformer: Additive Attention is All You Need”, Wu et al 2021

“FLASH: Transformer Quality in Linear Time”, Hua et al 2022 (see also MLP-Mixer)

“NAT: Neighborhood Attention Transformer”, Hassani et al 2022; “DiNAT: Dilated Neighborhood Attention Transformer”, Hassani & Shi 2022
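The global ↔ local idea can be sketched as a Longformer-style mask, under assumptions (hypothetical function name, a symmetric window, a hand-picked set of global tokens, and no random attention as in BigBird): each ordinary token attends within ±`window` positions plus the global tokens, while global tokens attend everywhere, so the number of allowed pairs grows as O(n·(window + number of globals)) rather than O(n²).

```python
import numpy as np


def local_global_mask(n: int, window: int, global_idx) -> np.ndarray:
    """Sliding-window attention plus a few global tokens (Longformer-style sketch).

    Every token sees neighbours within `window` positions; tokens listed in
    `global_idx` attend to, and are attended by, every position.
    """
    i = np.arange(n)[:, None]
    j = np.arange(n)[None, :]
    mask = np.abs(i - j) <= window        # local band
    g = np.zeros(n, dtype=bool)
    g[list(global_idx)] = True            # hypothetical choice of global positions
    return mask | g[:, None] | g[None, :]


mask = local_global_mask(12, window=1, global_idx=[0])
print(mask.sum())     # 54 of 144 pairs
print(mask[5].sum())  # 4: positions 4, 5, 6 plus the global token 0
```

The mask can be plugged into any masked-attention routine; as with the sparsity patterns, real speedups depend on kernels that skip the masked blocks rather than computing and discarding them.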

Miscellaneous

Dropping components, non-trainable/randomized parts, etc.:

“Generating Wikipedia by Summarizing Long Sequences”, Liu et al 2018 (memory compressed)

“Pay Less Attention with Lightweight and Dynamic Convolutions”, Wu et al 2019b

“Music Transformer”, Huang et al 2020

“Synthesizer: Rethinking Self-Attention in Transformer Models”, Tay et al 2020

“Performer (FAVOR): Masked Language Modeling for Proteins via Linearly Scalable Long-Context Transformers”, Choromanski et al 2020a (on turning Transformers into RNNs); “FAVOR+: Rethinking Attention with Performers”, Choromanski et al 2020b (blog; DRL use; can be trained in constant memory); “RFA: Random Feature Attention”, Peng et al 2020; “DPFP: Linear Transformers Are Secretly Fast Weight Memory Systems”, Schlag et al 2021; “DAFT: A Dot Product Attention Free Transformer”, Zhai et al 2021
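The random-feature idea behind FAVOR+ can be sketched with positive random features: for ω ~ N(0, I), φ(x) = exp(ωᵀx − |x|²/2)/√m satisfies E[φ(q)·φ(k)] = exp(q·k), so the softmax kernel is estimated without ever forming it, and the same reassociation as in linear attention applies. This is a simplified sketch: it uses i.i.d. rather than orthogonal features, never redraws them, skips the usual d^(-1/4) scaling of q and k, and the function names are hypothetical.

```python
import numpy as np


def positive_random_features(x: np.ndarray, omega: np.ndarray) -> np.ndarray:
    """phi(x) = exp(omega^T x - |x|^2 / 2) / sqrt(m), with omega's columns ~ N(0, I).

    In expectation phi(q) . phi(k) = exp(q . k), and every feature is positive.
    """
    m = omega.shape[1]
    sq_norm = (x ** 2).sum(axis=1, keepdims=True) / 2
    return np.exp(x @ omega - sq_norm) / np.sqrt(m)


def performer_style_attention(q, k, v, omega):
    # Linear-time attention using the random features in place of exp(q . k).
    fq, fk = positive_random_features(q, omega), positive_random_features(k, omega)
    return (fq @ (fk.T @ v)) / (fq @ fk.sum(axis=0))[:, None]


rng = np.random.default_rng(0)
d, m = 4, 50_000
q = 0.25 * rng.standard_normal((6, d))
k = 0.25 * rng.standard_normal((6, d))
v = rng.standard_normal((6, 3))
omega = rng.standard_normal((d, m))  # sketch assumption: i.i.d. (FAVOR+ orthogonalizes these)

exact_kernel = np.exp(q @ k.T)
approx_kernel = positive_random_features(q, omega) @ positive_random_features(k, omega).T
exact_out = (exact_kernel / exact_kernel.sum(axis=1, keepdims=True)) @ v
print(np.abs(approx_kernel / exact_kernel - 1).mean())  # small relative error
print(np.abs(performer_style_attention(q, k, v, omega) - exact_out).max())
```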

“Nyströmformer: A Nyström-Based Algorithm for Approximating Self-Attention”, Xiong et al 2021; “Skyformer: Remodel Self-Attention with Gaussian Kernel and Nyström Method”, Chen et al 2021

“Funnel-Transformer: Filtering out Sequential Redundancy for Efficient Language Processing”, Dai et al 2020

“LazyFormer: Self Attention with Lazy Update”, Ying et al 2021

“RASP: Thinking Like Transformers”, Weiss et al 2021 (examining limitations of efficient Transformers: in terms of algorithms, what does going from n² to n cost? What “programs” do Transformers encode?)

“Stable, Fast and Accurate: Kernelized Attention with Relative Positional Encoding”, Luo et al 2021

“On Learning the Transformer Kernel”, Chowdhury et al 2021

Structured State Space Models (SSMs): “Combining Recurrent, Convolutional, and Continuous-time Models with Linear State-Space Layers”, Gu et al 2021a; “S4: Efficiently Modeling Long Sequences with Structured State Spaces”, Gu et al 2021b; “HiPPO: Recurrent Memory with Optimal Polynomial Projections”, Gu et al 2020
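Part of the appeal of SSMs for long sequences is that one linear recurrence has two equivalent forms: a step-by-step RNN (constant cost per generated token) and a convolution over the whole input (parallel training). A toy sketch under strong assumptions: A is a small random matrix treated as already discretized, whereas S4 uses a structured, HiPPO-initialized continuous-time A that is then discretized, and the direct `np.convolve` here is O(n²) where S4 uses FFTs. Function names are hypothetical.

```python
import numpy as np

# Sketch assumption: random, already-discretized A; scalar input and output.


def ssm_recurrent(A, B, C, u):
    """x_k = A x_{k-1} + B u_k,  y_k = C . x_k  (scalar input and output per step)."""
    x = np.zeros(A.shape[0])
    ys = []
    for u_k in u:
        x = A @ x + B * u_k
        ys.append(C @ x)
    return np.array(ys)


def ssm_convolutional(A, B, C, u):
    """The same map as a causal convolution with kernel K_j = C A^j B."""
    n = len(u)
    kernel = np.empty(n)
    a_pow_b = B.copy()            # A^j B, starting from j = 0
    for j in range(n):
        kernel[j] = C @ a_pow_b
        a_pow_b = A @ a_pow_b
    return np.convolve(u, kernel)[:n]  # first n outputs = causal part


rng = np.random.default_rng(0)
N, n = 4, 64
A = 0.2 * rng.standard_normal((N, N))
B, C = rng.standard_normal(N), rng.standard_normal(N)
u = rng.standard_normal(n)
print(np.allclose(ssm_recurrent(A, B, C, u), ssm_convolutional(A, B, C, u)))  # True
```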

“Self-attention Does Not Need 𝒪(n²) Memory”, Rabe & Staats 2021 (though it still costs 𝒪(n²) compute)
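The memory/compute distinction can be sketched as follows: process the keys and values in chunks while keeping a running per-query maximum and softmax denominator, so only an (n × chunk) block of scores exists at any time, yet the result is exactly the dense softmax attention. This is a simplified sketch in the spirit of Rabe & Staats (the paper also chunks the queries); the function name and the chunk size are assumptions, and total compute remains O(n²).

```python
import numpy as np


def chunked_attention(q, k, v, chunk: int = 16):
    """Exact softmax attention computed over key/value chunks.

    Keeps a running max `m`, denominator, and weighted sum per query, so extra
    memory is O(n * chunk) instead of O(n^2); compute is still O(n^2).
    Sketch assumption: no learned projections, no query chunking.
    """
    n, d = q.shape
    scale = 1.0 / np.sqrt(d)
    m = np.full((n, 1), -np.inf)          # running max of scores per query
    denom = np.zeros((n, 1))              # running softmax denominator
    acc = np.zeros((n, v.shape[1]))       # running weighted sum of values
    for start in range(0, k.shape[0], chunk):
        s = q @ k[start:start + chunk].T * scale           # (n, chunk) scores
        m_new = np.maximum(m, s.max(axis=1, keepdims=True))
        correction = np.exp(m - m_new)                     # rescale old sums to the new max
        p = np.exp(s - m_new)
        denom = denom * correction + p.sum(axis=1, keepdims=True)
        acc = acc * correction + p @ v[start:start + chunk]
        m = m_new
    return acc / denom


rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((100, 8)) for _ in range(3))
out = chunked_attention(q, k, v, chunk=16)  # 100 is not a multiple of 16: last chunk is short
```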

“How Much Does Attention Actually Attend? Questioning the Importance of Attention in Pretrained Transformers”, Hassid et al 2022

MLPs (for removing attention entirely)

Retrieval

“REALM: Retrieval-Augmented Language Model Pre-Training”, Guu et al 2020

“MARGE: Pre-training via Paraphrasing”, Lewis et al 2020a

“RAG: Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks”, Lewis et al 2020b

“Current Limitations of Language Models: What You Need is Retrieval”, Komatsuzaki 2020

“Memorizing Transformers”, Wu et al 2022
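Retrieval sidesteps the attention cost by searching a large external store and attending only to the few best matches. Below is a hypothetical brute-force sketch closest in spirit to the kNN lookup over cached (key, value) pairs in Memorizing Transformers; REALM/RAG-style systems instead retrieve whole documents with a separate retriever. The brute-force scoring here is itself O(n_query × n_memory), and a real system would substitute an approximate nearest-neighbour index; the function name is an assumption.

```python
import numpy as np


def knn_memory_attention(q, mem_keys, mem_values, top_k: int):
    """Attend only to the top_k stored (key, value) pairs most similar to each query.

    Sketch assumption: exact brute-force search stands in for an approximate
    nearest-neighbour index over a very large memory.
    """
    scores = q @ mem_keys.T / np.sqrt(q.shape[1])                  # (n_q, n_mem)
    idx = np.argpartition(-scores, top_k - 1, axis=1)[:, :top_k]   # top_k per query, unordered
    top = np.take_along_axis(scores, idx, axis=1)                  # (n_q, top_k)
    w = np.exp(top - top.max(axis=1, keepdims=True))
    w /= w.sum(axis=1, keepdims=True)                              # softmax over retrieved keys only
    return np.einsum("qk,qkd->qd", w, mem_values[idx])


rng = np.random.default_rng(0)
mem_keys = rng.standard_normal((1000, 16))
mem_values = rng.standard_normal((1000, 8))
q = rng.standard_normal((5, 16))
out = knn_memory_attention(q, mem_keys, mem_values, top_k=32)
print(out.shape)  # (5, 8)
```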

[1] While not directly examining efficient attention mechanisms, “Do Transformer Modifications Transfer Across Implementations and Applications?”, Narang et al 2021, which benchmarks Transformer activations/normalizations/depths/embeddings/weight-tying/architectures, finds that (as often in ML) the gains are smaller than reported & may reflect methodological issues like intensity of hyperparameter tuning & no-free-lunches. Meanwhile, the vanilla Transformer can be heavily hardware-optimized to allow much larger context lengths (eg. FlashAttention). See also “Scaling Laws vs Model Architectures: How does Inductive Bias Influence Scaling?”, Tay et al 2022.

[2] For comparison, Joe Davison finds XLNet is ~10–16× more parameter-efficient at few-shot learning: XLNet-0.4b ≈ GPT-3-6.7b.

[3] Speculative inclusion: there may be some way to use the factorization of axial attention on 1D sequences like natural language. Axial attention is generally intended for multidimensional data like 2D images, where it can split the full attention into separate Height and Width components, each far cheaper than full attention over every pixel.
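The factorization this footnote speculates about can be sketched under assumptions: a 1D sequence whose length factors as height × width is reshaped into a grid, then attention runs along each row and afterwards along each column, with no learned projections and no causal mask (a language model would need one). Each position attends to width + height keys instead of n, i.e. O(n·√n) total for a square grid, yet after the two passes every position can influence every other. The function names are hypothetical.

```python
import numpy as np


def softmax_attend(q, k, v):
    # Attention over the second-to-last axis; leading axes act as a batch.
    s = q @ np.swapaxes(k, -1, -2) / np.sqrt(q.shape[-1])
    w = np.exp(s - s.max(axis=-1, keepdims=True))
    return (w / w.sum(axis=-1, keepdims=True)) @ v


def axial_attention_1d(x, height: int, width: int):
    """Reshape a length-(height*width) sequence into a grid; attend along rows, then columns.

    Sketch assumptions: identity Q/K/V projections, no causal masking.
    """
    n, d = x.shape
    grid = x.reshape(height, width, d)
    grid = softmax_attend(grid, grid, grid)   # row pass: each row is a batch item
    cols = np.swapaxes(grid, 0, 1)            # (width, height, d)
    cols = softmax_attend(cols, cols, cols)   # column pass: each column is a batch item
    return np.swapaxes(cols, 0, 1).reshape(n, d)


rng = np.random.default_rng(0)
x = rng.standard_normal((64, 8))              # a length-64 sequence viewed as an 8 x 8 grid
y = axial_attention_1d(x, height=8, width=8)
x2 = x.copy()
x2[0] += 1.0                                  # perturb the first position only
changed = np.abs(axial_attention_1d(x2, 8, 8) - y).sum(axis=1) > 0
print(changed.all())  # True: two axial passes connect every pair of positions
```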

[4] One question I have about methods which reuse part of the context window for memory: can we do curriculum training, efficiently training a Transformer normally with a fixed window for most of the training and then switching over to overloading part of the context as the new memory (Yoshida et al 2020)? That would hypothetically save much of the compute, although one might wonder if the learned algorithms & representations will be inferior compared to a Transformer which was always trained with memory.